Prologue: the media problem in Minecraft

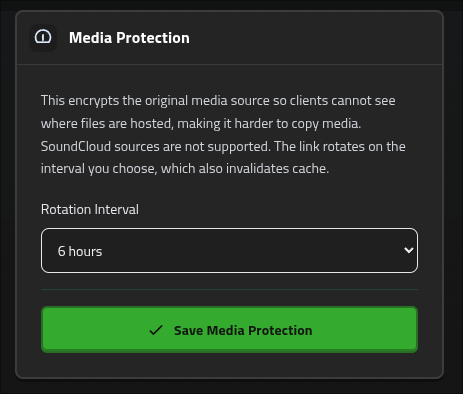

OpenAudioMc is used by thousands of Minecraft communities to provide Proximity VoiceChat and Music Playback. It was one of the first public services to provide a solution for audio in Minecraft, which is actually a lot more difficult than it sounds.

The game itself allows you to include custom audio files in what's called a "Resource Pack", but this requires the client to download these files in advance and keep all of them in memory, which doesn't scale at all. OpenAudioMc instead provides a solution where a web-based client can stream audio from any URL, which allows for much more flexibility and scalability. And this is a solution that my users have been enjoying for almost 10 years now.

ImagineFun is a Minecraft community that has been using OpenAudioMc for a long time. They run a very large server with thousands of monthly players, and I also just happen to work there. We've recently been faced with some users trying to piggyback off our CDN, which shone a light on what turned out to be a broader issue with OpenAudioMc.

See, the way the web-client works is that it connects over a WebSocket connection to one of OpenAudio's "relay" servers. These servers are responsible for forwarding "commands" from the Minecraft server to the correct web-client. I do it like this so I can take responsibility for authentication and routing, and my end-users don't have to set up any complicated network configuration on their end. (This is grossly simplified, but you get the idea.)

Let's take ImagineFun as an example. When you walk into a shop that has ambiance music, the Minecraft server detects that there should be media playing at your current location. If it sees you have a client open, it will send a "media create request" that flows through the relay server and eventually reaches your web-client, which then starts streaming the media from the provided URL.

These requests are pretty simple, and come down to "play a new sound, give it this ID, use these settings. You can download the file here and start playing it".

This is a pretty simple flow, and it has some advantages because of its simplicity, such as:

- Low latency (the client fetches the media directly)

- Simple caching (the media URL never changes, so the browser can cache it as it pleases)

- Cheap on OA's end (the media is fetched directly from the CDN, and doesn't have to go through OA's servers)

- We do offer our own CDN and proxy nowadays as well, but it's not required to use OA, and many of our users use their own hosting or other third-party CDNs.

But this simplicity is also the major weakness of this approach, because it means that anyone can just take the media URL and use it in their own projects, or even share it with others. This may not sound like a big deal, but it can lead to bandwidth theft or "leeching", where people use the media without permission, which can lead to increased costs for OA and a degraded experience for our users.

There have even been cases where unannounced projects have been "leaked" through the audio client, because the client could be seen pre-loading /audio/unreleased/secretshow.mp3 or something like that, which is a pretty big deal for some of our users.

To solve this issue, we needed to implement a way to protect the media URLs, and ensure that only authorized clients can access them. The solution we came up with is to use asymmetric encryption. But there's actually some nuance to this that makes it interesting, so let's dive into how it works.

Optimistic: why not just encrypt the media URL as-is?

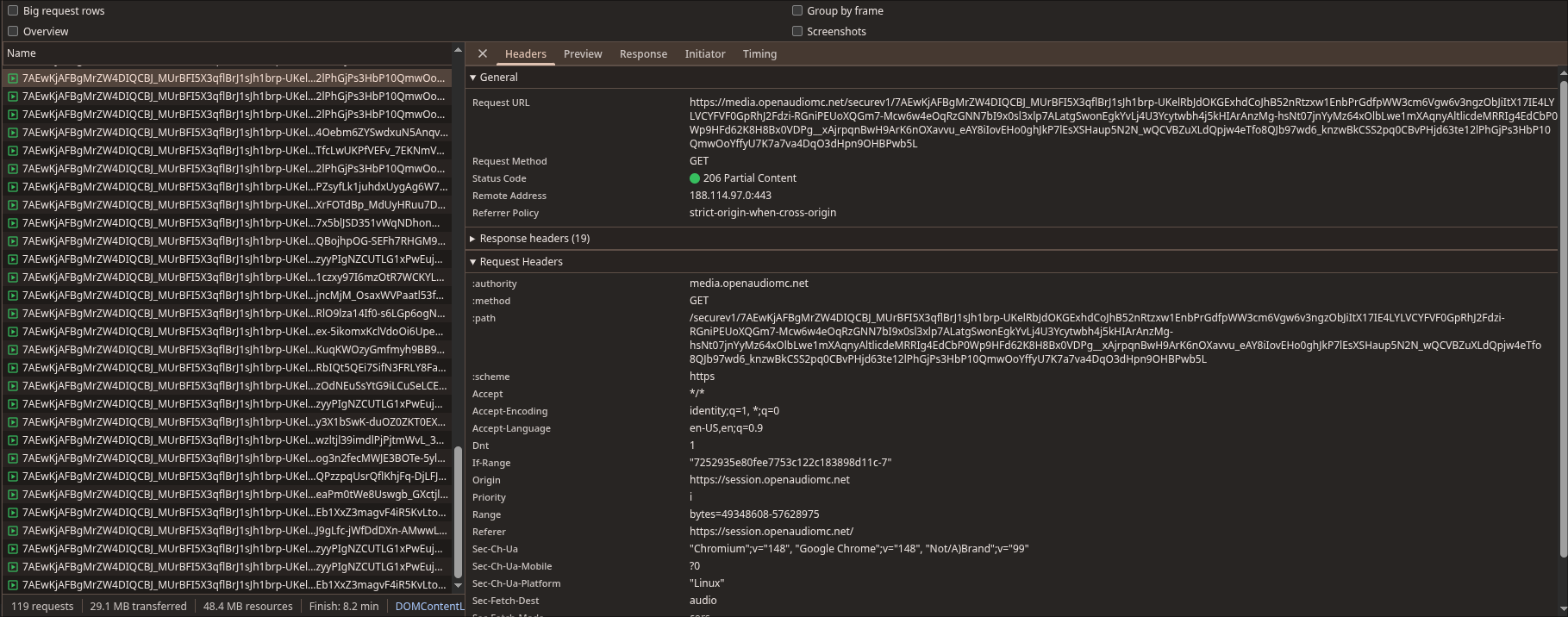

It's pretty clear that we need a reverse proxy at the end of the chain, that contacts the CDN on behalf of the client, and serves the media to the client, so we can add some authentication and authorization logic there. The tricky part is how to securely send the media URL to the client, in a way that the proxy can still understand it, but unauthorized parties in the middle cannot.

The first idea that comes to mind is to just encrypt the media URL as-is, using a symmetric encryption algorithm like AES, and then send the encrypted URL to the client. The client would then pass that on to the proxy, which would decrypt it and fetch the media from the CDN. This is a pretty straightforward solution, and it would successfully hide the real media URL, but it doesn't fully solve our problems. One of the issues we sought to solve is "leeching". If we just encrypt the URL, then anyone who has the encrypted URL can still share it with others, and they would still be able to access the media through the proxy.

Well, that still sounds easy enough, right? Why not include some metadata like the expected client ID (which the proxy can verify), and a timestamp that defines when the link should "expire"? This would prevent leeching, because it allows us to do integrity checks and straight up invalidate the link after a certain amount of time, and it would prevent leaking (because the client cannot read the CDN target itself).

Congratulations, you made the problem worse!

Wait, how?! Didn't we solve leeching and leaking?

Well, yes.

But this is a great example of a solution that fixes the symptoms, but not the root cause. The whole point of preventing "leeching" was to reduce the cost and bandwidth consumed by unauthorized users, but in doing so, we accidentally increased both for everyone.

The expiration timestamp is a "moving" parameter, which will be different for every request. This may only be a difference of a few bytes, but thanks to the nature of encryption, it results in a completely different encrypted URL for every request. The browser can then no longer cache the media effectively, which leads to increased bandwidth usage and costs for OA: the exact opposite of what we wanted to achieve. (Not to mention the degraded experience for our users, who no longer benefit from caching.)

Time windows

The core problem is that the expiry timestamp moves with every second that passes. Even if all other fields in the payload are identical, a timestamp that changes every second means the encrypted output changes every second, because encryption turns even a one-bit difference in input into a completely different output.

What we need is a timestamp that stays stable for a meaningful period, but still expires predictably. The answer is to stop thinking about expiry as a point in time, and start thinking about it as a window of time.

Instead of asking "when does this link expire?", we ask "which window does this link belong to?"

If we divide the timeline into fixed buckets of N seconds, every request that happens inside the same bucket will produce the same window index. The encrypted payload becomes identical across all requests in that window, which means the browser can cache it freely. When the window rolls over, the index increments, the payload changes, and the old link stops working.

On a timeline with 30-minute windows, every request between 12:00:00 and 12:29:59 falls into the same window, and the next window starts at 12:30:00.

The window boundary is the only moment the URL changes. Inside a window, it is completely stable, so the browser cache behaves exactly as it did in the legacy flow.

The implementation is two lines:

    // requires: import "time"
    func computeValidUntil(lifetimeSeconds int) (validUntil int64, windowIndex int64) {
        now := time.Now().Unix()
        windowIndex = now / int64(lifetimeSeconds)              // which window are we in?
        validUntil = (windowIndex + 1) * int64(lifetimeSeconds) // the next window boundary
        return
    }

windowIndex is just integer division of the current Unix timestamp by the window size. Every second inside a 30-minute window divides to the same integer. validUntil is the next boundary, which is the actual expiry the proxy checks against.
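A few worked values make the arithmetic easier to follow. The sketch below uses `computeValidUntilAt`, a hypothetical variant of the function above that takes the current time as a parameter instead of reading the clock, so the results are reproducible. The timestamps are arbitrary examples.

```go
package main

import "fmt"

// computeValidUntilAt mirrors computeValidUntil, but with the clock injected
// (an assumption made for this example so the output is deterministic).
func computeValidUntilAt(now int64, lifetimeSeconds int) (validUntil int64, windowIndex int64) {
	windowIndex = now / int64(lifetimeSeconds)
	validUntil = (windowIndex + 1) * int64(lifetimeSeconds)
	return
}

func main() {
	const lifetime = 1800 // 30-minute windows

	for _, now := range []int64{
		1778830200, // first second of a window
		1778830447, // 4 minutes and 7 seconds into the same window
		1778831999, // last second of that window
		1778832000, // first second of the next window
	} {
		validUntil, windowIndex := computeValidUntilAt(now, lifetime)
		fmt.Println(now, windowIndex, validUntil)
	}
	// Output:
	// 1778830200 988239 1778832000
	// 1778830447 988239 1778832000
	// 1778831999 988239 1778832000
	// 1778832000 988240 1778833800
}
```

The first three timestamps share a window index and an expiry; only the fourth, which sits exactly on the boundary, moves to the next window.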

What goes inside the encrypted payload

The full payload that gets encrypted and embedded in the URL looks like this:

    type EncryptMediaCommandPayload struct {
        OriginalSource  string `json:"originalSource"`         // the real CDN URL, hidden from the client
        ValidUntilUnix  int    `json:"validUntilUnix"`         // unix timestamp of the next window boundary
        AcceptedClient  string `json:"client"`                 // the client ID this link was issued to
        BucketFolder    string `json:"bucketFolder,omitempty"`
        WindowIndex     int64  `json:"windowIndex"`            // which window this was issued in
        LifetimeSeconds int    `json:"lifetimeSeconds"`        // window size, so the proxy can validate without shared config
    }

A concrete example for a 30-minute window issued at 12:34:07:

    {
      "originalSource": "https://static.imaginefun.net/audio/rides/bigthunder.mp3",
      "validUntilUnix": 1778832000,
      "client": "https://imaginefun.openaudiomc.net",
      "windowIndex": 988239,
      "lifetimeSeconds": 1800
    }

This entire JSON blob gets encrypted with X25519 key exchange and AES-256-GCM, and the result is base64-encoded into the URL:

https://media.openaudiomc.net/securev1/EC<encrypted bytes...>

The client receives this URL and knows nothing about what is inside it. It cannot read the original source, the expiry, or the window index. It just holds a token.

How the proxy validates it

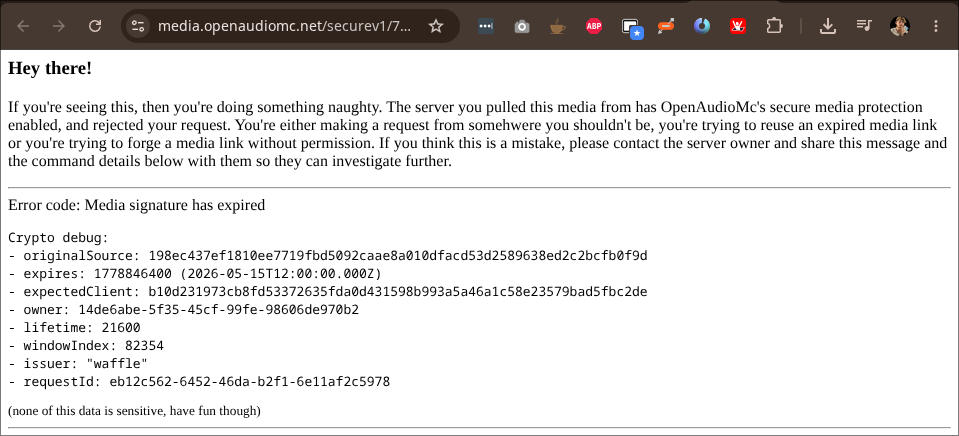

When the client requests media, it passes this URL to the Cloudflare Worker. The worker decrypts the payload with the private key (which only the worker holds), and then runs three checks:

    function isMediaTokenValid(
      payload: EncryptMediaCommandPayload,
      currentClient: string
    ): boolean {
      const nowSecs = Math.floor(Date.now() / 1000);
      const lifetimeSecs = payload.lifetimeSeconds;

      // 1. Hard expiry check
      if (nowSecs > payload.validUntilUnix) return false;

      // 2. Window drift check: allow at most one window of drift to cover
      //    the edge case where a token was issued just before a boundary
      //    but validated just after
      const expectedWindow = Math.floor(nowSecs / lifetimeSecs);
      const drift = expectedWindow - payload.windowIndex;
      if (drift < 0 || drift > 1) return false;

      // 3. Client binding check
      if (payload.client !== currentClient) return false;

      return true;
    }

The window drift check is the interesting one. A token issued at 12:59:59 has windowIndex = N and validUntilUnix pointing at 13:00:00. If the client requests media one second later, at 13:00:00, the expected window is already N+1, giving a drift of 1, which is still accepted. The hard expiry check passes as well, because it only rejects once the current time is past validUntilUnix; from 13:00:01 onward it rejects the token. A drift of 2 or more means the token is from a previous window entirely and gets rejected, even if validUntilUnix somehow still passes (which it would not in practice, but the defense-in-depth is worth having).

The window drift allowance of 1 is the only edge case accommodation. A stolen token from yesterday cannot be replayed today because the drift would be enormous, and a shared token from a different client cannot be replayed because the AcceptedClient check would fail.
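The boundary behaviour is easy to get wrong in your head, so here is a small Go port of the three checks with the clock passed in as a parameter. It is an illustration only, not the worker's code: the `mediaToken` type, the `isValid` name, and the guard against a non-positive lifetime are additions for this sketch, and the timestamps are arbitrary.

```go
package main

import "fmt"

// mediaToken holds only the fields the checks need (hypothetical type for this sketch).
type mediaToken struct {
	ValidUntilUnix  int64
	WindowIndex     int64
	LifetimeSeconds int64
	Client          string
}

// isValid applies the same three checks as the TypeScript function,
// with the current time injected so the edge cases are reproducible.
func isValid(tok mediaToken, currentClient string, now int64) bool {
	if tok.LifetimeSeconds <= 0 { // extra guard added here to avoid dividing by zero
		return false
	}
	// 1. Hard expiry check
	if now > tok.ValidUntilUnix {
		return false
	}
	// 2. Window drift check: the current window or the one right after it
	drift := now/tok.LifetimeSeconds - tok.WindowIndex
	if drift < 0 || drift > 1 {
		return false
	}
	// 3. Client binding check
	return tok.Client == currentClient
}

func main() {
	const boundary = int64(1778832000) // end of window 988239 with 1800-second windows
	tok := mediaToken{
		ValidUntilUnix:  boundary,
		WindowIndex:     988239,
		LifetimeSeconds: 1800,
		Client:          "https://imaginefun.openaudiomc.net",
	}

	fmt.Println(isValid(tok, tok.Client, boundary-1))                // true: same window
	fmt.Println(isValid(tok, tok.Client, boundary))                  // true: drift of 1, not yet past expiry
	fmt.Println(isValid(tok, tok.Client, boundary+1))                // false: past the hard expiry
	fmt.Println(isValid(tok, tok.Client, boundary+86400))            // false: a day later
	fmt.Println(isValid(tok, "https://other.example", boundary-1))   // false: wrong client
	fmt.Println(isValid(tok, tok.Client, boundary-1800-1))           // false: negative drift
}
```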

The caching story, resolved

With windows in place, the URL lifecycle looks like this for a 30-minute window:

- 12:00:00 - first request in the window. Relay encrypts the payload, caches the resulting URL keyed on source + client + windowIndex. Cache miss, one encryption operation.

- 12:01:30 - second request for the same source. Window index is still the same, so the relay returns the cached URL. No encryption. Browser also gets a cache hit if it has seen this URL before.

- 12:30:00 - window boundary. Window index increments. Next request is a cache miss, a new URL is produced, and the old one will expire within seconds.

The relay-side cache is just a sync.Map keyed on the combination of source, client, and window index:

    cacheKey := fmt.Sprintf("%s|%s|%d", originalSource, clientBaseUri, windowIndex)

The result is that encryption only runs once per window for each unique combination of source and client, and the browser cache behaves exactly as it did in the legacy flow for the duration of that window. The cost of protection is at most one extra encryption per cached entry at each window boundary, which happens at most once every N seconds, where N is the configured lifetime.

And the final banger: all of this was a transparent change for end users. It requires zero changes in the client or plugin. This is a great example of how to retrofit a security solution into an existing system without breaking anything, by carefully designing the new flow to be compatible with the old one, and only changing the internals of the relay and proxy. The client just gets a URL as before, but now it's a secure token instead of a plain CDN link.

Final integration